-

AI-driven drug discovery faces a new crisis: a 99.98% failure rate in generated molecules.…

-

AI-generated text in images is now a viable reality with OpenAI’s Images 2.0, collapsing…

-

AI-powered employee monitoring at Meta will now capture employee keystrokes to train its Llama…

-

AI training with employee data at Meta aims to codify expert engineering workflows, potentially…

-

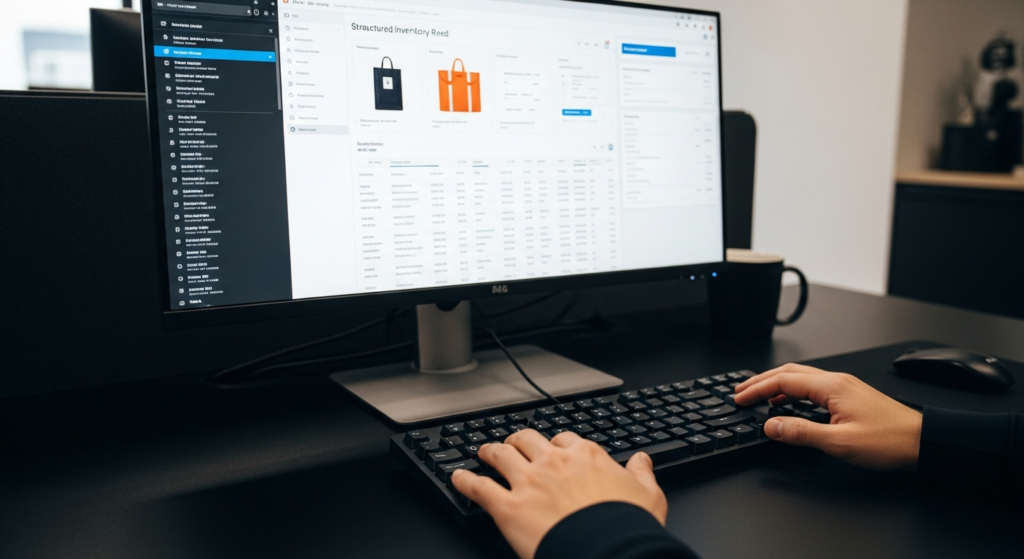

AI-driven local inventory search is now live in Google’s AI Overviews, connecting user queries…

-

AI-powered inventory search from Google’s SGE activates its 35 billion product listings to provide…

-

Google’s 2026 AI Blueprint Slashes Cloud Costs with On-Device Tools

Google Photos AI tools now offer on-device editing with sub-250ms processing, signaling a major strategic shift in user privacy and edge computing.

-

NSA Deploys Anthropic: A 2026 Blueprint for AI Procurement Success

AI model procurement in national security faces a schism as the NSA reportedly deploys Anthropic’s Mythos, defying a Pentagon feud over AI safety.

-

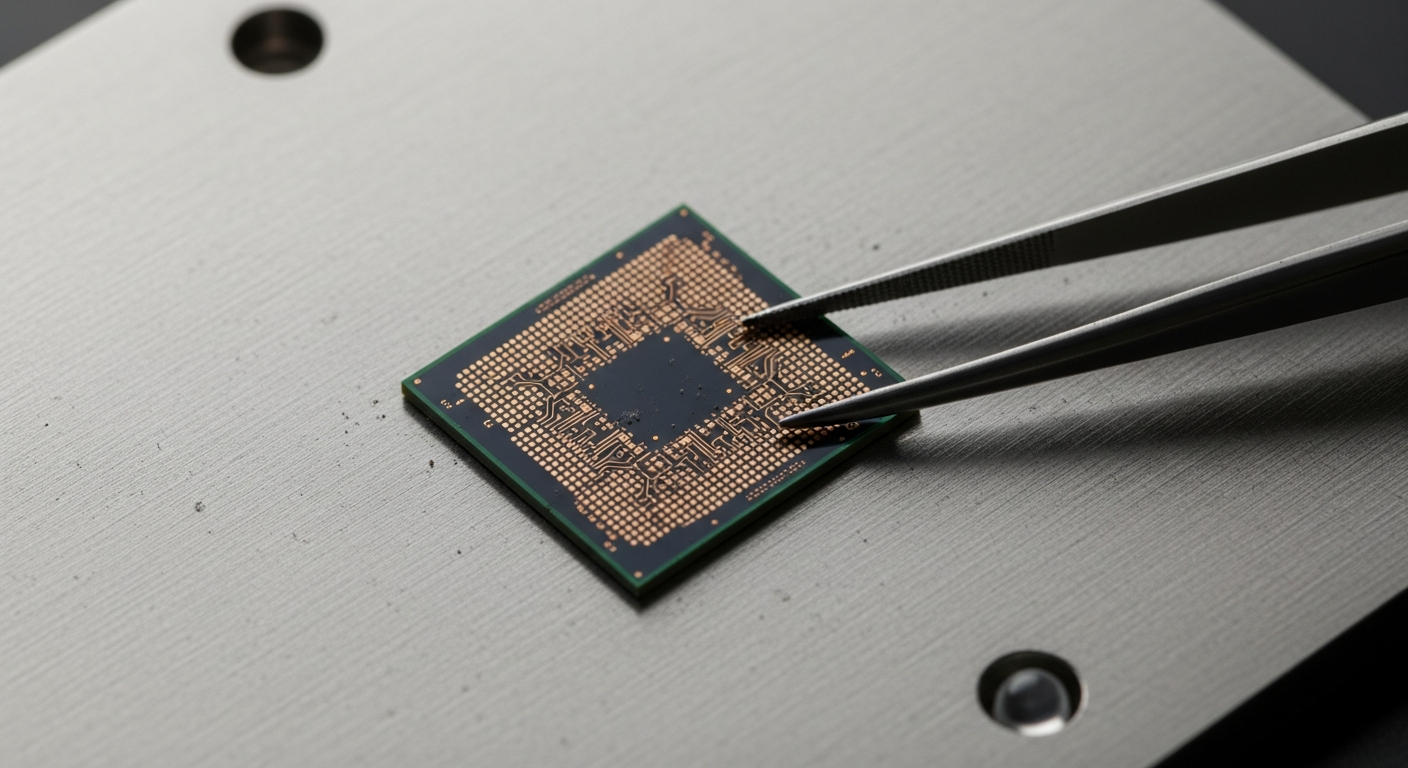

Amazon Trainium Chips Slash AI Training Costs by 40% for AWS in 2026

By enabling a 40% reduction in model training costs, Amazon's custom Trainium processors are rapidly redefining AI infrastructure strategy.

-

Amazon Trainium Slashes LLM Costs: A 2024 Blueprint for AI Scale

Amazon Trainium is redefining the economics of AI, delivering a significant reduction in power-per-token costs that helps scale foundation model deployment.

-

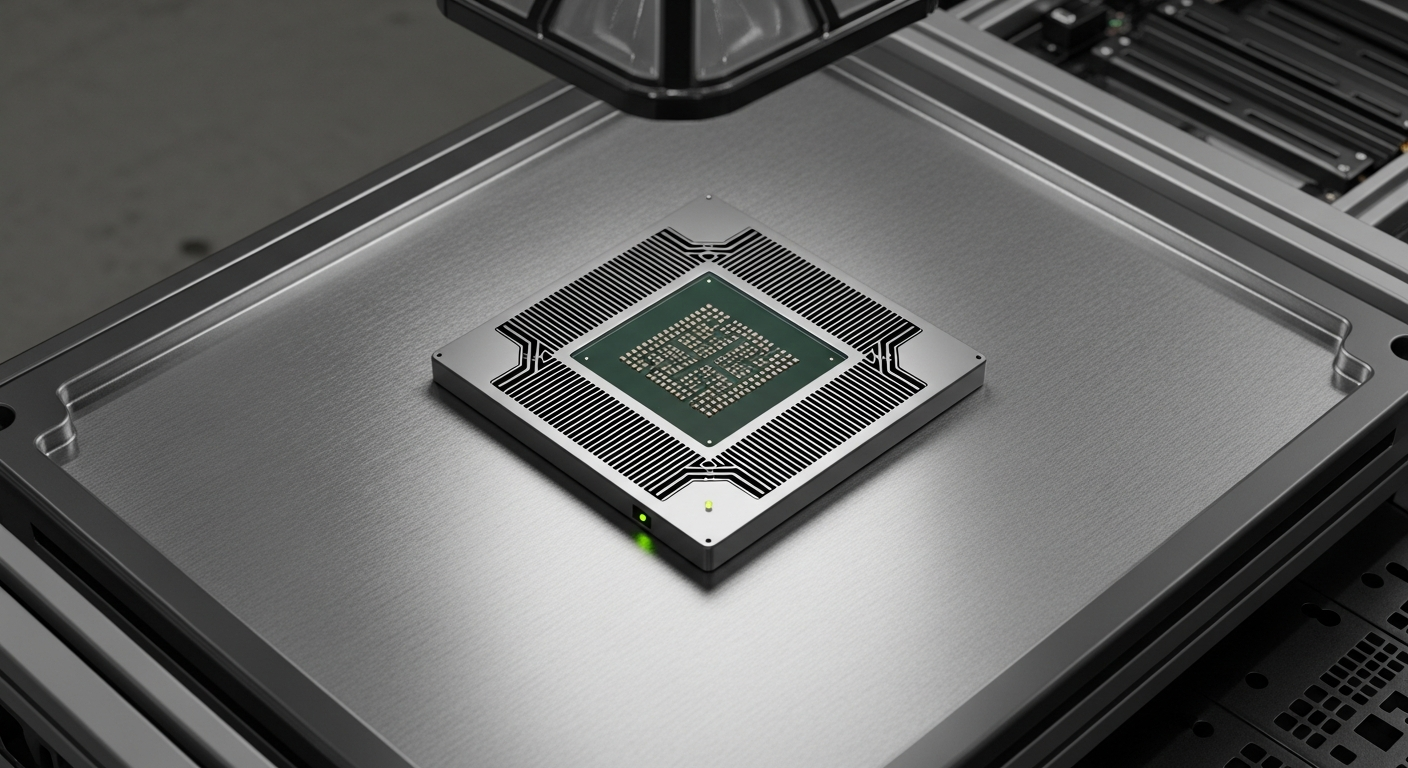

Chip manufacturing plans: Tesla, SpaceX Strategic Blueprint for 2026 Autonomy

Tesla and SpaceX's chip manufacturing plans target vertical integration, projecting a 40% reduction in thermal latency to accelerate autonomous AI systems.